This year marks four decades since Apple founded its first accessibility office, an initiative to build more adaptable computers that would launch decades of device and operating system tools, including Assistive Access and Personal Voice. As Global Accessibility Awareness Day (May 15) approaches, Apple is leaning into this legacy.

Previewing a slew of new features set to be released throughout this year, the company explained it was ushering in a “new level of accessibility across the Apple ecosystem,” utilizing on-device machine learning and artificial intelligence. This includes brand new App Store, Mac, and Apple Vision Pro updates, accessible device modes, and inter-device compatibility.

“At Apple, accessibility is part of our DNA,” said Apple CEO Tim Cook. “Making technology for everyone is a priority for all of us, and we’re proud of the innovations we’re sharing this year.”

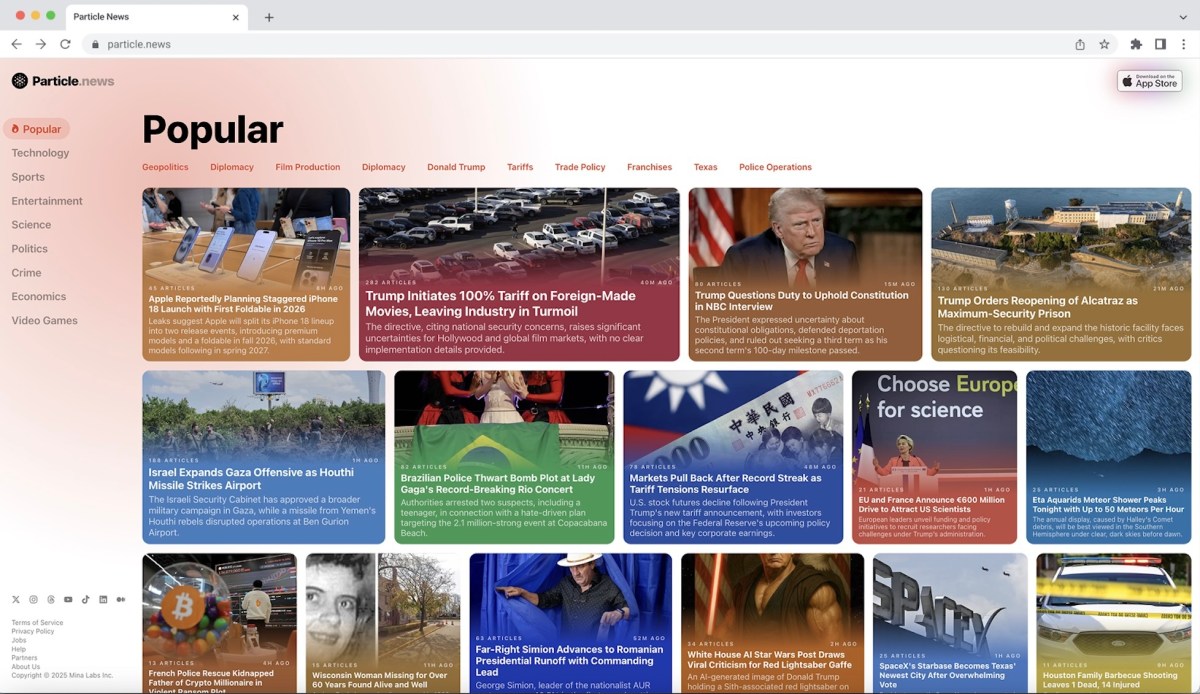

App Store Nutrition Labels

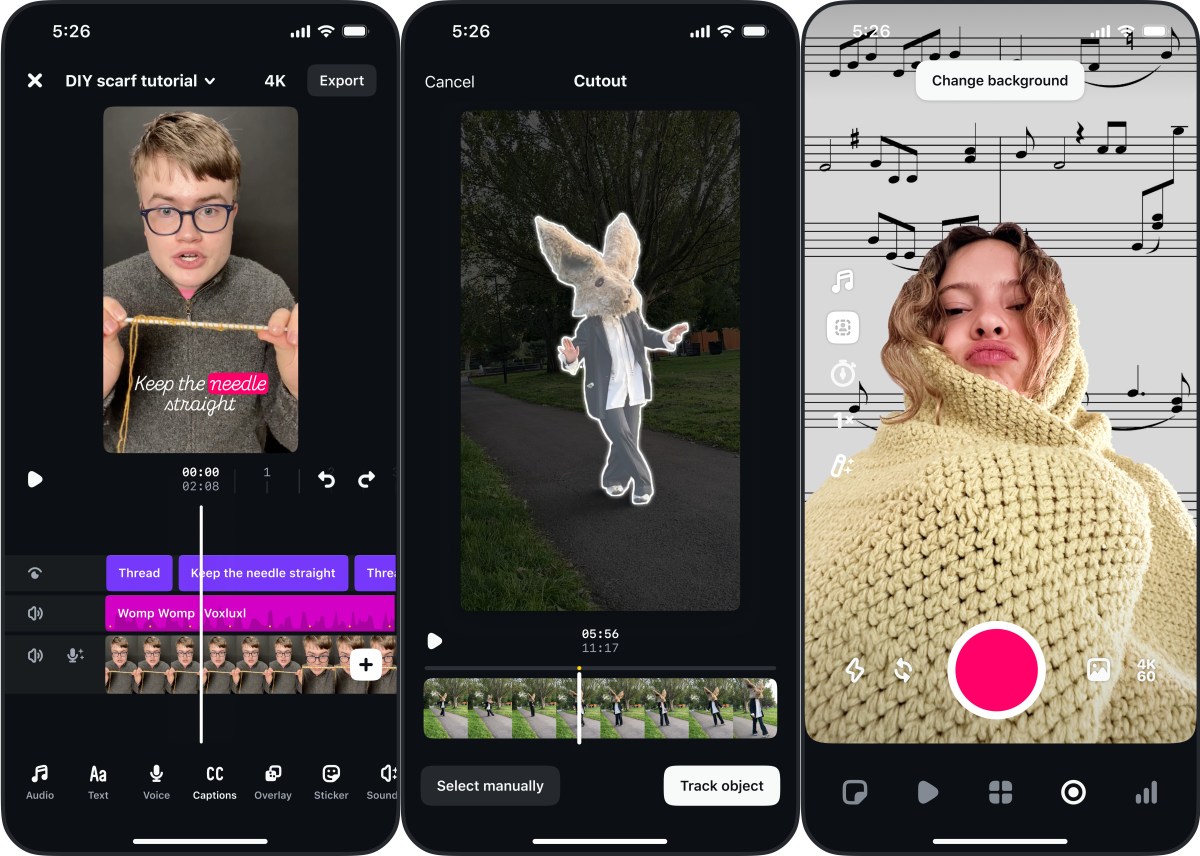

The company announced a brand-new accessibility tagging system, debuting on the App Store worldwide and on Apple product listings later this year, that aims to make it easier for Apple users with disabilities to find apps and products that fit their needs.

Scroll down on apps and games in the App Store to find the new Accessibility Nutrition Label, which includes in-depth accessibility information like features and compatibility, as well as external links to developer information. (The labels don’t have anything to do with nutrition; they’re modeled on the iconic design of food packaging labels.)

For now, developers will be limited to nine marketplace labels, including VoiceOver, Voice Control, Larger Text, Sufficient Contrast, Reduced Motion, Captions, and Audio Description. Apple’s announcement coincides with wider tagging efforts, including those in the gaming industry, and required nutrition labels for internet service providers.

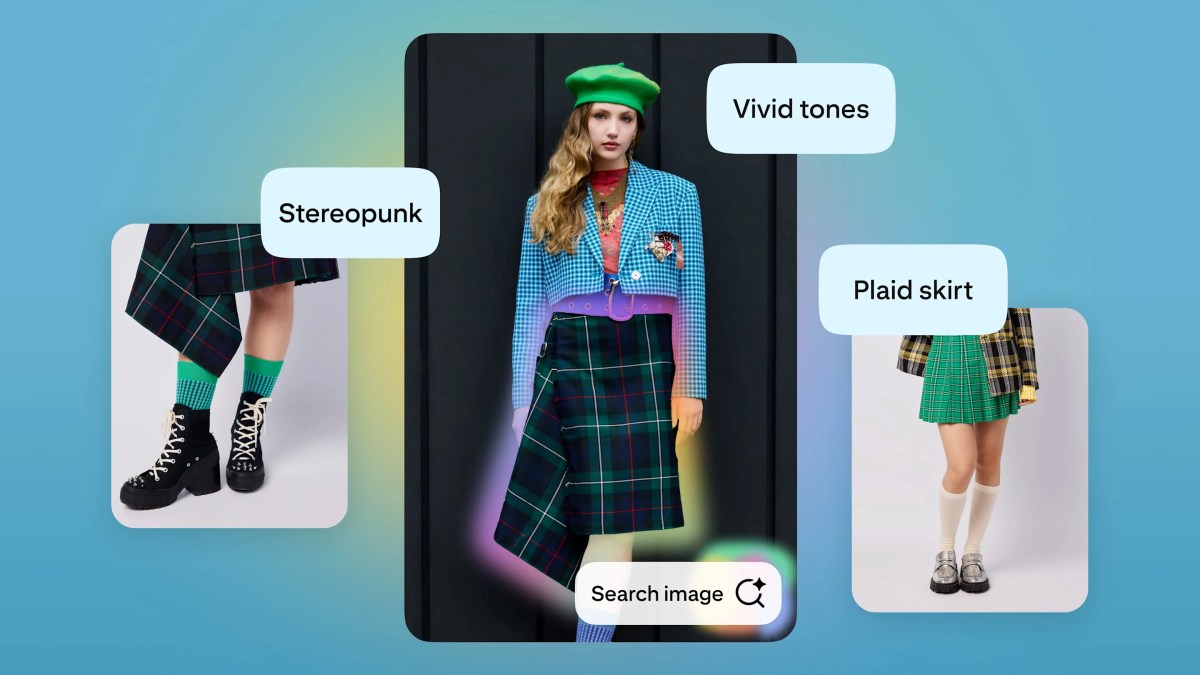

New magnifier features

Apple will finally add its flagship Magnifier tool to Mac, enabling users to activate Magnifier on a connected camera, including iPhones, and view the feed on their computers. Users can then adjust brightness, contrast, color filters, and perspective to make text and images easier to see, live capture views through the camera, and use document view on saved text.

VisionOS updates

Apple Vision Pro will get expanded vision accessibility features via a new visionOS update later this year, too. A more controllable Zoom tool will let users zero in on specific areas of their field of vision, such as a recipe in a cookbook, while keeping the rest of the view the same.

Individuals can also use Live Recognition on Apple Vision Pro to have their surroundings described to them, find objects, and read documents using on-device machine learning. A new main camera API will also open up access to developers of person-to-person assistance apps, such as Be My Eyes — Meta added a similar integration to its Ray-Ban Meta Smart Glasses last year.

Revamped Braille experiences

Apple is launching a new feature it’s calling Braille Access, which turns iPhone, iPad, Mac, and Apple Vision Pro devices into a full-featured braille note taker, the company explains. Using Braille Access, users can launch apps, take notes in braille format, and perform calculations using Nemeth Braille (used for math and science equations). Braille Ready Format (BRF) files can be opened directly in Braille Access, and, paired with Live Captions, the devices can translate live speech directly into braille.

Credit: Apple

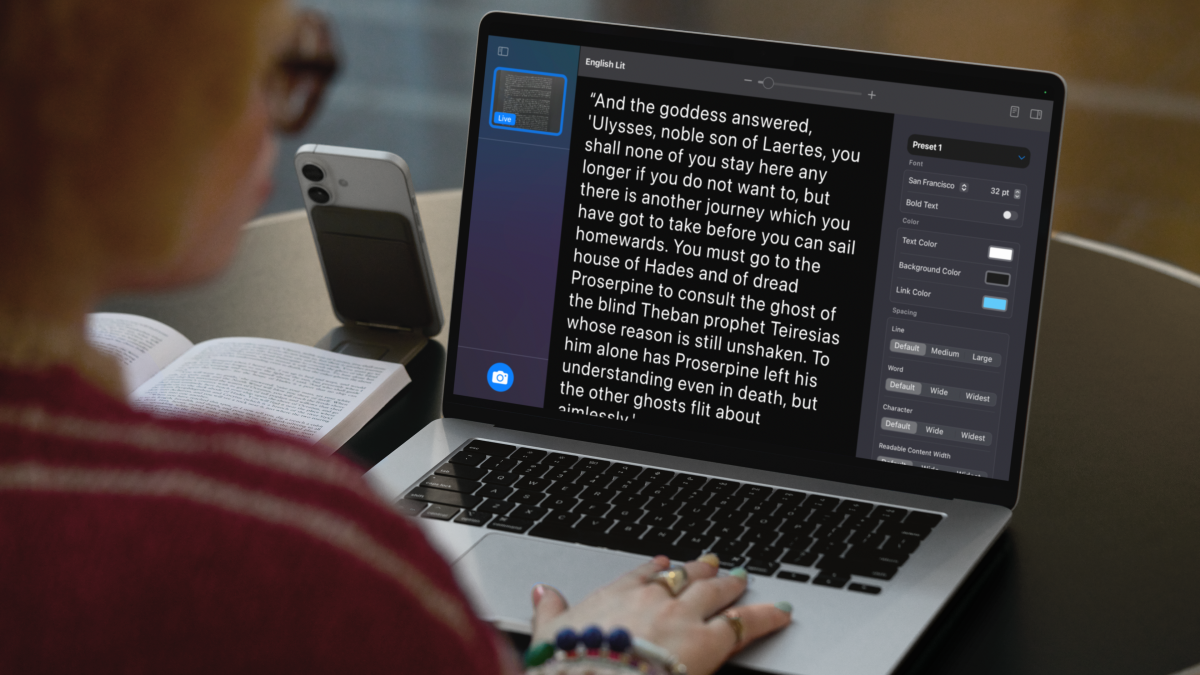

Accessible Reader mode

Accessible Reader takes Apple’s text personalization and applies it systemwide, meaning individuals can customize any text in any app to their preferred reading set-up, like a different font, color, spacing, or Spoken Content tools. The mode is also available in Magnifier, so users can capture real-world text, then convert and customize it.

Expanded motion controls

Apple Watch users will be able to use Live Captions in tandem with Live Listen, a feature that amplifies external sounds and turns your device into a remote microphone. By connecting their iPhone or other listening device to their Apple Watch, users can follow along with Live Captions synced with a device across the room, for example.

Apple will be revamping its motion-related accessibility tools this year too, including faster eye and head tracking for typing and motion sickness reduction for Mac.

Apple also plans to release expanded language options for AI-powered Live Captions, a new Assistive Access viewing mode for Apple TV watchers, and a new settings option that lets users instantly share their personalized accessibility selections with other devices.